Important Linux commands

This article collects frequently used commands for Linux system administration and related tools, grouped by task.

Basic system commands

| Scenarios | Commands |

|---|---|

| Inspect the host name | hostname |

| Inspect the host name and kernel version | uname -a |

| Inspect the Linux distribution information | lsb_release -a |

| Inspect the CPU information | cat /proc/cpuinfo |

| Inspect the memory | free -m |

| Inspect the user | 1. w 2. who 3. whoami 4. tty |

| Inspect the hard drive | 1. df -h 2. df -aTh 3. du -h --max-depth=1 . |

| Inspect the ports | 1. netstat -ano | findstr 7001 (Windows) 2. sudo netstat -tunlp 3. netstat -aonp | grep 5050 |

| Inspect the firewall | sudo iptables -L -n |

| Check whether a port on a remote host is reachable | nmap -Pn -p 27018 google.com.au |

| Inspect the path env for current user | echo $PATH |

| Inspect the process by pid | 1. ps -f -p 23416 2. tasklist | findstr 23416 (Windows) |

| Inspect the process by name | ps -ef | grep nginx |

| Kill the process by pid | 1. kill -9 9788 2. taskkill -f -pid 9788 (Windows) |

| Curl a URL and save the request/response trace to a text file | curl 130.220.209.90:9000 --trace-ascii dump.txt |

| Get a root shell | 1. sudo -s 2. sudo bash -c "su -" |

| Clean Linux log files (deletes everything under /var/log) | sudo rm -rf /var/log/* |

| Find processes that still hold deleted files open, then kill them | 1. sudo lsof | grep deleted 2. sudo kill <pid> |

| Kill the process using a specific port (macOS) | 1. lsof -i tcp:8443 2. kill 86166 |

| Flush swap back into memory, then lower the swappiness threshold (temporarily, then permanently) | 1. sudo swapoff -a && sudo swapon -a 2. sudo sysctl vm.swappiness=10 3. sudo vi /etc/sysctl.conf (set vm.swappiness=10) |
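
The inspect-by-pid and kill-by-pid rows can be combined into one flow. The following is a minimal sketch, assuming a throwaway `sleep` process as the target (it is started only so the example is self-contained): `kill -0` checks whether the PID exists, a plain `kill` sends SIGTERM, and the check is repeated afterwards.

```shell
#!/bin/sh
# Start a throwaway background process to act as the target (illustrative only).
sleep 30 &
pid=$!

# kill -0 sends no signal; it only reports whether the PID exists and can be signalled.
if kill -0 "$pid" 2>/dev/null; then before="running"; else before="gone"; fi

# Ask the process to terminate (SIGTERM), then reap it so the PID is released.
kill "$pid"
wait "$pid" 2>/dev/null || true

if kill -0 "$pid" 2>/dev/null; then after="running"; else after="gone"; fi
echo "before=$before after=$after"
```

Trying SIGTERM first gives the process a chance to clean up; `kill -9` is usually kept as a last resort.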

File commands

| Scenarios | Commands |

|---|---|

| Find a file with the given name under current path | find . -name "*" |

| Find an archive file containing the given xml file | find . -name "*.jar" | xargs grep "*.xml" |

| Find a file containing the given content under current path | 1. grep -s -r "*" ./ 2. find . -name "*.xml" | xargs grep "*" |

| Find a file containing the given content under current path and only print the file name to the output file | find . -name "*.*" | xargs grep -ri "APXMTMRR" -l > grep_APXMTMRR.log |

| Find the top size files | 1. du -a . | sort -n -r | head -n 10 2. find . -type f -size +20000k -exec ls -lh {} \; | awk '{ print $9 ": " $5 }' |

| List the folders' size in current folder | du -h --max-depth=1 . |

| List the latest changed files under current folder | ls -lrth |

| Find the files under specific folder and append the timestamp | find /var -maxdepth 2 -type d -exec stat -c "%n %y" {} \; |

| Paste text containing # into Vim while keeping the formatting | :set paste |

| Compress a folder into a tar.gz archive | sudo tar -zvcf /data/bak.tar.gz /data/db |

| Extract a tar.gz archive | sudo tar -zvxf /data/bak.tar.gz |

| Copy file to remote by scp | scp agriculture-platform/rebuild.sh ec2-user@130.220.209.90:/home/ec2-user/agriculture-platform/rebuild.sh |

| Copy folder to remote by scp | scp -r agriculture-platform ec2-user@130.220.209.90:/home/ec2-user |

| Copy file from remote to local by scp | 1. scp -i sshkey googlecloud@test.com:/home/googlecloud/test/keystore.p12 . 2. scp -i "~/sshkey.pem" -r ec2-user@test.com:/mnt/data data-test |

| Clean the content in a file | sed -i "d" Dockerfile |
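
The `find ... | xargs grep` rows above split file names on whitespace, so a path containing a space is passed to `grep` in pieces. The sketch below shows one way around that using `-print0` and `xargs -0`; the temporary directory and file names are made up for the illustration, and the search string is the one from the table.

```shell
#!/bin/sh
# Build a small throwaway tree so the example is self-contained (paths are illustrative).
workdir=$(mktemp -d)
mkdir -p "$workdir/conf dir"
printf 'key=APXMTMRR\n' > "$workdir/conf dir/app.xml"
printf 'key=other\n'    > "$workdir/conf dir/other.xml"

# -print0 / -0 keep file names with spaces intact; grep -l prints only matching file names.
matches=$(cd "$workdir" && find . -name "*.xml" -print0 | xargs -0 grep -l "APXMTMRR")
echo "$matches"

rm -rf "$workdir"
```

Redirecting the output (`> grep_APXMTMRR.log`) works the same way as in the table row.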

Software commands

| Scenarios | Commands |

|---|---|

| Install software by yum | 1. sudo yum -y install docker 2. sudo yum install java-1.8.0-openjdk.x86_64 |

| Uninstall software by yum | sudo yum -y remove docker |

| List installed software by yum | 1. yum list installed | grep docker 2. yum -y list docker* |

| Search software by yum | yum search java | grep 'java-' |

| Install software by apt-get | 1. apt-get update 2. apt-get install vim |

| List installed software by RPM | rpm -qa | grep docker |

Service commands

| Scenarios | Commands |

|---|---|

| Restart vsftpd | systemctl restart vsftpd |

| Reload nginx configuration | /usr/nginx/sbin/nginx -s reload |

| Check nginx configuration | /usr/nginx/sbin/nginx -t |

JVM commands

| Scenarios | Commands |

|---|---|

| Inspect JVM default heap flag | java -XX:+PrintFlagsFinal -version | grep HeapSize |

| Inspect the java process arguments | jinfo -flags 1 |

| Inspect the java process GC information | jmap -heap 1 |

| List Java processes | jps |

| List the basic class memory information by pid | jmap -histo 1 | head |

| List the class loaders memory information by pid | jmap -clstats 1 |

| Dump the java process heap information by pid | jcmd 1 GC.heap_dump heap_dump.hprof |

Scenarios

Maven and SpringBoot commands

#Generate project

mvn archetype:generate \

-DgroupId=com.alibaba.test \

-DartifactId=tutorial1 \

-Dversion=1.0-SNAPSHOT \

-Dpackage=com.alibaba.test.tutorial1 \

-DarchetypeArtifactId=archetype-test-quickstart \

-DarchetypeGroupId=com.alibaba.test.sample \

-DarchetypeVersion=1.0 \

-DinteractiveMode=false

#Build project without testing

mvn clean package -Dmaven.test.skip=true

#Run SpringBoot with specific profile

java -Dspring.profiles.active=dev -Xms256m -Xmx1024m -jar calculate-1.0.jar

mvn spring-boot:run -Drun.profiles=dev #(Spring Boot 1.x; 2.x and later use -Dspring-boot.run.profiles=dev)

mvn spring-boot:run -Drun.jvmArguments="-Dspring.profiles.active=dev"

#Run in debug mode

java -agentlib:jdwp=transport=dt_socket,server=y,address=5050,suspend=y -jar calculate-1.0.jar

mvn spring-boot:run -Drun.jvmArguments="-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=y,address=5005"

mvn spring-boot:run -Drun.jvmArguments="-Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=5005 -Dspring.profiles.active=dev"

#Create SpringBoot project using start.spring.io

curl https://start.spring.io/starter.zip -d dependencies=web,data-jpa,devtools,h2 -d groupId=au.com.frankie -d artifactId=example -d name=example -d description="Spring Boot Example Application" -d baseDir=example -o myapp.zip && unzip myapp.zip && rm -f myapp.zip

SSH from server1 to server2 without password

#Generate private/public key in server1, and copy the public key

ssh-keygen -t rsa

cat ~/.ssh/id_rsa.pub

#Append the public key to authorized keys in server2

vi ~/.ssh/authorized_keys

#SSH from server1 to server2:

ssh ec2-user@130.220.209.90

#Allow or deny IPs: (block the ssh request or not)

sudo vi /etc/hosts.deny

#(remove the client IP)

sudo systemctl restart sshd.service
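
Editing `authorized_keys` by hand on server2 makes it easy to paste the key twice or leave the file with loose permissions. The sketch below shows one possible way to script the append step; it runs against a temporary directory standing in for `~/.ssh`, and the key text is a made-up placeholder, not a real key.

```shell
#!/bin/sh
# Stand-ins for server1's public key and server2's ~/.ssh (hypothetical path and key text).
ssh_dir=$(mktemp -d)
pubkey="ssh-rsa AAAAB3NzaExampleKeyOnly user@server1"
auth_file="$ssh_dir/authorized_keys"

add_key() {
    touch "$auth_file"
    # -x matches whole lines, -F treats the key as a fixed string, so the key is added only once.
    grep -qxF "$pubkey" "$auth_file" || printf '%s\n' "$pubkey" >> "$auth_file"
    # With StrictModes (the sshd default), keys are rejected if these are writable by other users.
    chmod 700 "$ssh_dir"
    chmod 600 "$auth_file"
}

add_key
add_key   # second call is a no-op
key_count=$(grep -cxF "$pubkey" "$auth_file")
echo "entries: $key_count"
rm -rf "$ssh_dir"
```

Where it is installed, `ssh-copy-id ec2-user@130.220.209.90` performs the same append from server1 in one step.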

Change timezone (Ubuntu)

date -R

sudo timedatectl set-timezone Australia/Brisbane

timedatectl

sudo timedatectl set-local-rtc 1 #(keep the hardware clock in local time)

sudo timedatectl set-local-rtc 0 #(keep the hardware clock in UTC)

Modify the fstab system file to automatically mount a volume after rebooting

sudo vi /etc/fstab

# modify the content as below

/dev/vdb /mnt/data auto defaults,nofail,comment=cloudconfig 0 2

#reboot the instance
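
An fstab entry has six whitespace-separated fields. As a rough illustration, the sketch below splits the entry shown above into those fields and prints what each one means; the variable names are chosen here for readability and are not part of fstab itself.

```shell
#!/bin/sh
# Split the fstab entry shown above into its six whitespace-separated fields.
read -r device mountpoint fstype options dump pass <<'EOF'
/dev/vdb /mnt/data auto defaults,nofail,comment=cloudconfig 0 2
EOF

echo "device:      $device"       # block device to mount
echo "mount point: $mountpoint"   # where it is attached
echo "fs type:     $fstype"       # auto = detect the filesystem type
echo "options:     $options"      # nofail = do not fail the boot if the device is missing
echo "dump:        $dump"         # 0 = skip dump backups
echo "fsck pass:   $pass"         # 2 = check after the root filesystem
```

Running `sudo mount -a` after editing mounts everything listed in fstab, which can surface a mistake before the reboot.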

Establish a tunnel from a local port to a public URL with ngrok (the host-header setting works around the Angular dev server's "Invalid Host header" error)

ngrok http 4200 -host-header="localhost:4200"

ngrok http --host-header=rewrite 4200

Pandoc usage

pandoc -s README.md -o README.docx -M title:architecture #(markdown -> docx)

pandoc --pdf-engine=xelatex README.md -o README.pdf #(markdown -> pdf)

Recover files deleted by rm -rf

#Install extundelete

sudo yum search extundelete

sudo yum install extundelete.*

#Find the filesystem to recover and its type (extundelete supports ext3/ext4)

df -T

#List the inodes and find the inode to recover:

sudo extundelete --inode 2 /dev/vda1

sudo extundelete --inode 393217 /dev/vda1

#Recover:

sudo extundelete --restore-inode 542480 /dev/vda1

#Recover all deleted after time

sudo yum search extundelete

sudo yum install extundelete.*

df -T

sudo umount /dev/vdc

date -d "2018-06-02 23:00:00" +%s

sudo extundelete /dev/vdc --after 1527980400 --restore-all

sudo cp -R /data .

sudo mount /dev/vdc /data -t auto

sudo cp -R ./data/* /data/
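
The `--after` value is an epoch timestamp, and `date -d` interprets the given wall-clock time in the machine's timezone unless told otherwise. The value 1527980400 used above corresponds to 2018-06-02 23:00:00 in UTC. The sketch below, which assumes GNU `date`, pins the conversion to UTC and converts the result back as a sanity check.

```shell
#!/bin/sh
# GNU date: convert a wall-clock time to epoch seconds. -u pins the interpretation to UTC;
# without it the result depends on the machine's timezone.
after=$(date -u -d "2018-06-02 23:00:00" +%s)
echo "$after"

# Convert back to double-check the value before passing it to --after.
check=$(date -u -d "@$after" "+%Y-%m-%d %H:%M:%S")
echo "$check"
```

If the deletion time is known in local time instead, dropping `-u` on a correctly configured machine gives the matching epoch value.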

HTTPS certificate

#Generate key

keytool -genkey -alias tomcat -storetype PKCS12 -keyalg RSA -keysize 2048 -keystore keystore.p12 -validity 3650

#Install certbot and certbot-auto

git clone https://github.com/certbot/certbot

cd certbot

./certbot-auto

./certbot-auto --help

#Generate certificates and a private key

#Remember to stop springboot process first

sudo ./certbot-auto certonly -a standalone -d test.com -d www.test.com

#Convert PEM files to PKCS12 files

sudo -s

cd /etc/letsencrypt/live/test.com

openssl pkcs12 -export -in fullchain.pem -inkey privkey.pem -out keystore.p12 -name tomcat -CAfile chain.pem -caname root

#copy keystore.p12 file to ~/agriculture-platform folder, exit su, then start springboot process

#Update HTTPS key(test.com)

cd ~/certbot

docker stop agriculture

sudo ./certbot-auto certonly -a standalone -d test.com

sudo -s

cd /etc/letsencrypt/live/test.com

openssl pkcs12 -export -in fullchain.pem -inkey privkey.pem -out keystore.p12 -name tomcat -CAfile chain.pem -caname root

cp keystore.p12 /home/ec2-user/agriculture-platform/

exit

cd ~/agriculture-platform/

vi Dockerfile #modify the date to recopy the key

. rebuild.sh

#Generate wildcard HTTPS certificate (*.test.com)

./certbot-auto certonly -d "*.test.com" --manual --preferred-challenges dns --server https://acme-v02.api.letsencrypt.org/directory

./certbot-auto certonly -d "*.cloud.lava.xin" --manual --preferred-challenges dns --server https://acme-v02.api.letsencrypt.org/directory

#Create the TXT record in DNS when certbot prompts for it

#Generate p12 by crt and key

openssl pkcs12 -export -name alpha -in alpha_v2.crt -inkey alpha.key -out alpha.p12

AWS volume

#Extend volume

lsblk #Check whether the volume needs to be extended in Linux

df -h

sudo growpart /dev/xvda 1 #xvda1 in the lsblk output is partition 1 of /dev/xvda, hence the "1" here

lsblk

sudo resize2fs /dev/xvda1

df -h

#Mount/Unmount volume

lsblk

df -h

sudo mount /dev/xvdf1 /data

sudo umount /dev/xvdf1

df -h

NectarCloud volume

#Attach and mount volume (/dev/vdc)

sudo fdisk -l

sudo mkfs.ext4 /dev/vdc

sudo mount /dev/vdc /data -t auto

sudo mount /dev/vdd /var/lib/docker -t auto

sudo chmod -R 777 /data

sudo umount /dev/vdd

#Increase the volume size(/dev/vdc)

sudo umount /dev/vdc

sudo e2fsck -f /dev/vdc

sudo resize2fs /dev/vdc

sudo mount /dev/vdc /data -t auto

Singularity in HPC

#MongoDB

singularity pull docker://mongo:3.4

singularity shell --bind data:/data mongo_3.4.sif

singularity instance start mongo_3.4.sif mongodb

mongod &

mongo

db

LVM management

#Install lvextend

yum whatprovides */lvextend

sudo yum install lvm2

#Enlarge the / disk

df -h

sudo lvextend -L +50G /dev/vda1 #lvextend expects a logical volume path (see sudo lvs); replace /dev/vda1 accordingly

Openstack API

#Install openstack client in Ubuntu

sudo apt update

sudo apt-get install python-pip python-dev

sudo apt install python-openstackclient

#Replace the queue with Queue

#...

##import queue

#import Queue as queue

#...

vi /home/ubuntu/.local/lib/python2.7/site-packages/openstack/utils.py

vi /home/ubuntu/.local/lib/python2.7/site-packages/openstack/cloud/openstackcloud.py

openstack server list

MongoDB operation

#Authentication

mongo --port 27017 -u "database" -p "password" --authenticationDatabase "admin"

use admin

db.auth('database','password')

#Clear plan cache

db.StandardCases.getPlanCache().clear()

#Inspect database/collection size

db.stats()

db.BackupDatas.stats()

#Check aggregation plan

db.StandardCases.aggregate([

{ $match: { datasetId: "5a6fddf188fba650b83b94c9", validated: true, "timestamp.minuteStr": { $lte: "2018-09-09 00:00", $gte: "2017-12-31 00:00" } } },

{ $project: { date: "$timestamp.minuteStr", toStat: 1 } },

{ $group: { _id: "$date", toStat: { $push: "$toStat" } } },

{ $sort: { _id: 1 } }

], {cursor: {}, allowDiskUse: true, explain: true})

#Check aggregation execution plan

db.StandardCases.explain("executionStats").aggregate([

{ $match: { datasetId: "5a6fddf188fba650b83b94c9", validated: true, "timestamp.minuteStr": { $lte: "2018-09-09 00:00", $gte: "2017-12-31 00:00" }, "filter.Tunnels_to_survey~Plants_to_survey~Variety": { $in: [ "Eureka" ] } } },

{ $project: { date: "$timestamp.minuteStr", toStat: 1 } },

{ $group: { _id: "$date", toStat: { $push: "$toStat" } } },

{ $sort: { _id: 1 } }

])

Crontab service

#Start/stop service

sudo /sbin/service crond start

sudo /sbin/service crond stop

sudo /sbin/service crond restart

sudo /sbin/service crond reload

#List current cron jobs

crontab -l

#Edit cron jobs

crontab -e

#Delete all cron jobs of the current user

crontab -r

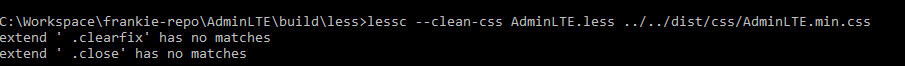
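
Each crontab entry consists of five schedule fields followed by the command to run. As a small illustration, the sketch below splits one entry into those parts; the entry itself, including the script path, is hypothetical.

```shell
#!/bin/sh
# A hypothetical crontab line: run /opt/scripts/backup.sh at 02:30 every Monday.
read -r minute hour day_of_month month day_of_week command <<'EOF'
30 2 * * 1 /opt/scripts/backup.sh
EOF

# Quote the variables so the * fields are printed literally instead of being glob-expanded.
echo "minute=$minute hour=$hour day_of_month=$day_of_month month=$month day_of_week=$day_of_week"
echo "command=$command"
```

A line in this form is what `crontab -e` expects, one job per line.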

Less commands

#Compile Less to a minified CSS file

lessc --clean-css AdminLTE.less ../../dist/css/AdminLTE.min.css

Git commands

#Git clone

git clone https://hustakin@github.com/hustakin/***.git

#Configure new git remote url and push

git remote add origin https://hustakin@github.com/hustakin/***.git

git push --set-upstream origin master

#Modify git remote url

git remote set-url origin https://hustakin@github.com/hustakin/***.git

git remote -v

#Find the size of git repository

git count-objects -v

#Prune all of the reflog references from this point back (unless you’re explicitly only operating on one branch) and repack the repository

git -c gc.auto=1 -c gc.autodetach=false -c gc.autopacklimit=1 -c gc.garbageexpire=now -c gc.reflogexpireunreachable=now gc --prune=all

#Push all your changes back to the Bitbucket repository

git push --all --force && git push --tags --force

#Force push the local to remote

git push -u origin master -f
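
The remote URL commands above can be tried safely in a scratch repository before touching a real one. The sketch below is one way to do that; both URLs are placeholders, and no network access happens because nothing is fetched or pushed. It assumes a git version that provides `git remote get-url` (2.7 or later).

```shell
#!/bin/sh
# Work in a throwaway repository; both URLs are placeholders, nothing is contacted.
repo=$(mktemp -d)
cd "$repo" || exit 1
git init -q

git remote add origin https://example.com/old/project.git
git remote set-url origin https://example.com/new/project.git

# get-url prints just the fetch URL, which is easier to script against than `git remote -v`.
current_url=$(git remote get-url origin)
echo "$current_url"

cd / && rm -rf "$repo"
```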

MongoDB commands

#Find by id in MongoDB Compass

{"_id":{"$oid":"5973048f0b30d6ed2104ad9d"}}

#start/shutdown commands:

mongod -f /etc/mongodb.conf

mongod --shutdown -f /etc/mongodb.conf

#MongoDB on mac

#The databases are stored in the /usr/local/var/mongodb/ or /usr/local/opt/mongodb/ directory

#The mongod.conf file is here: /usr/local/etc/mongod.conf

#The mongo logs can be found at /usr/local/var/log/mongodb/

#The mongo binaries are here: /usr/local/Cellar/mongodb/[version]/bin

Install tools

Maven

wget http://www.strategylions.com.au/mirror/maven/maven-3/3.5.4/binaries/apache-maven-3.5.4-bin.tar.gz

tar xvf apache-maven-3.5.4-bin.tar.gz

sudo mv apache-maven-3.5.4 /usr/local/apache-maven

# Add the env variables to your ~/.bashrc file

export M2_HOME=/usr/local/apache-maven

export M2=$M2_HOME/bin

export PATH=$M2:$PATH

source ~/.bashrc

mvn -version

rm -rf apache-maven-3.5.4-bin.tar.gz
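
If `mvn -version` reports "command not found" after these steps, the usual cause is that the new PATH entry is not active in the current shell. The sketch below shows one way to check for the entry; it reuses the variable names from above and does not require Maven to be installed.

```shell
#!/bin/sh
# Same variables as above; the install directory does not need to exist for this check.
M2_HOME=/usr/local/apache-maven
M2=$M2_HOME/bin
PATH=$M2:$PATH
export M2_HOME M2 PATH

# Wrap PATH in colons so the match works for the first, middle, or last entry.
case ":$PATH:" in
    *":$M2:"*) on_path=yes ;;
    *)         on_path=no ;;
esac
echo "maven bin on PATH: $on_path"
```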

Ping and telnet

apt-get update

yes | apt-get install iputils-ping

yes | apt-get install telnet

Grunt

#Install grunt and update the package.json dependencies

npm install grunt --save-dev #(development-only tools such as grunt or test frameworks)

npm install grunt --save #(packages needed in production)